Back, shoulder or knee pain: incorrect posture at the workplace can have consequences. A sensor system being developed by researchers at the German Research Centre for Artificial Intelligence (DFKI) and TU Kaiserslautern could help. Sensors worn on the arms, legs and back, for example, record movement sequences, and software evaluates the data obtained. The system gives the user direct feedback via a smartwatch so that they can correct their movement or posture. The sensors could be integrated into work clothing and shoes. The researchers presented the technology at the medical technology trade fair Medica, held from November 15 to 18, 2021, at the Rhineland-Palatinate research stand (hall 3, stand E80).

Assembling components in a bent posture, regularly stowing heavy crates on shelves, or quickly typing an e-mail to a colleague: at work, most people do not pay attention to an ergonomically sound posture or a gentle sequence of movements. This can result in back pain that recurs several times a month or even several times a week and becomes chronic over time. Incorrect posture can also lead to permanent pain in the hips, neck or knees.

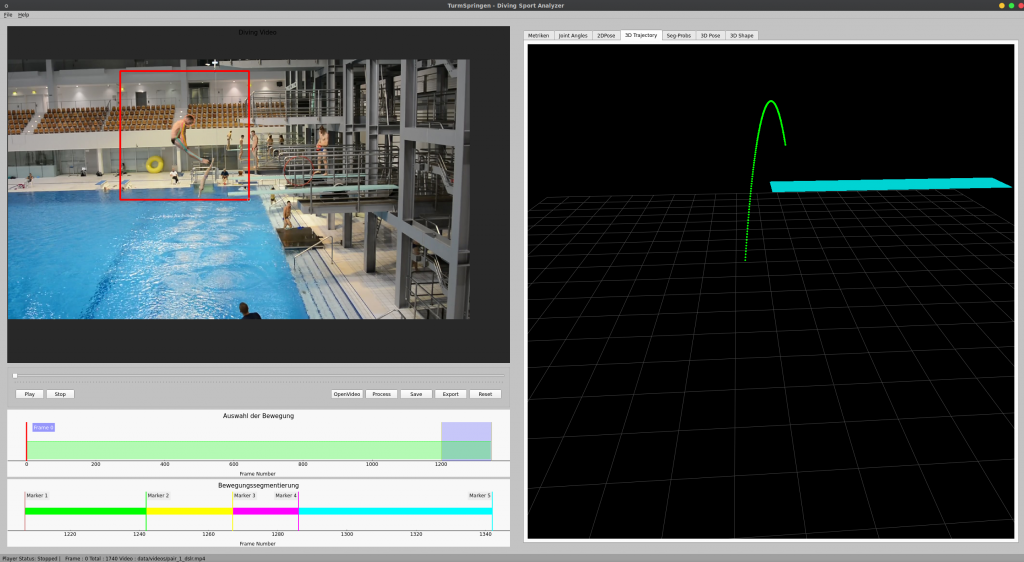

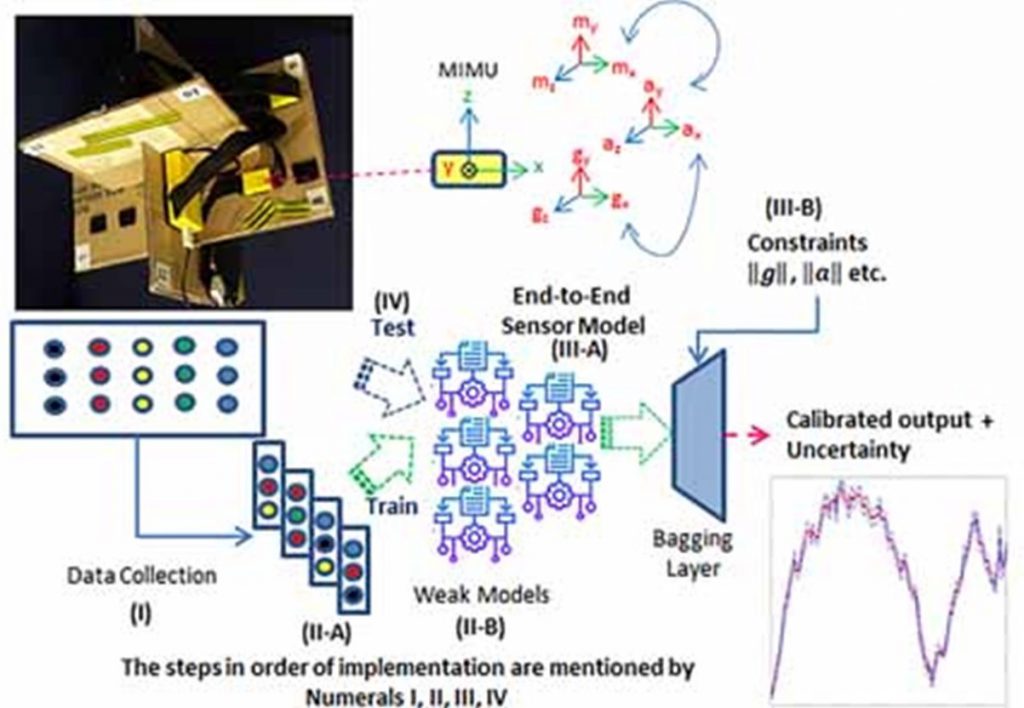

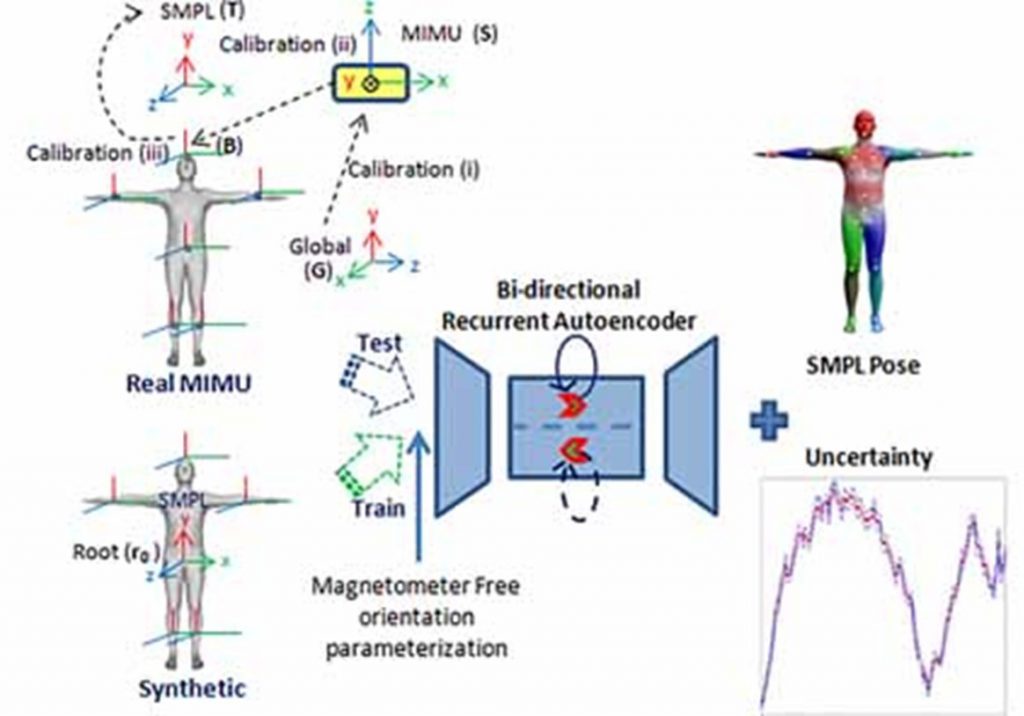

A technology currently being developed by a research team at DFKI and Technische Universität Kaiserslautern (TUK) could provide a remedy in the future. It uses sensors that are simply attached to different parts of the body, such as the arms, spine and legs. “Among other things, they measure accelerations and so-called angular velocities. The data obtained is then processed by our software,” says Markus Miezal from the wearHEALTH working group at TUK. From this data, the software calculates motion parameters such as joint angles at the arm and knee or the degree of flexion or twisting of the spine. “The technology immediately recognizes if a movement is performed incorrectly or if an incorrect posture is adopted,” continues his colleague Mathias Musahl from the Augmented Vision/Extended Reality research unit at DFKI.
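To illustrate how accelerations and angular velocities can be turned into a joint angle, here is a minimal sketch. It is not the researchers' software, whose algorithms are not described here. It assumes a simplified two-dimensional case in which each body segment carries one sensor, and it uses a complementary filter, a common textbook technique swapped in for illustration. The function names, the axis convention and the filter weight `alpha` are all assumptions.

```python
import math

def accel_pitch(ax, az):
    """Tilt angle (rad) implied by the gravity direction in the sensor's x-z plane."""
    return math.atan2(ax, az)

def complementary_filter(samples, dt, alpha=0.98):
    """Fuse gyroscope and accelerometer readings into a tilt estimate.

    samples: iterable of (gyro_y in rad/s, ax, az) tuples (assumed axis convention).
    dt:      sampling interval in seconds.
    alpha:   weight of the integrated gyroscope signal (illustrative value).
    """
    estimates = []
    pitch = None
    for gyro_y, ax, az in samples:
        acc = accel_pitch(ax, az)
        if pitch is None:
            # Initialise from the accelerometer alone.
            pitch = acc
        else:
            # Integrate angular velocity, then pull towards the gravity-based angle.
            pitch = alpha * (pitch + gyro_y * dt) + (1.0 - alpha) * acc
        estimates.append(pitch)
    return estimates

def joint_angle_deg(proximal_pitch, distal_pitch):
    """Joint angle as the difference between two segment tilt angles, in degrees."""
    return math.degrees(proximal_pitch - distal_pitch)

# Hypothetical static example: thigh sensor tilted 30 degrees, shank sensor upright.
g = 9.81
thigh = [(0.0, g * math.sin(math.radians(30)), g * math.cos(math.radians(30)))] * 50
shank = [(0.0, 0.0, g)] * 50
thigh_pitch = complementary_filter(thigh, dt=0.01)[-1]
shank_pitch = complementary_filter(shank, dt=0.01)[-1]
knee_angle = joint_angle_deg(thigh_pitch, shank_pitch)
```

A real system would have to work in three dimensions, calibrate each sensor to its body segment, and cope with sensor drift and movement of the sensor on the body, none of which this sketch attempts.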

The smartwatch informs the user directly so that they can correct their movement or posture. Among other things, the researchers plan to integrate the sensors into work clothing and shoes. The technology is of interest to industrial companies, for example, but it can also help people pay more attention to their own bodies in everyday office work at a desk.
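One simple way such direct feedback could be triggered is to raise an alert when a measured angle stays above a limit for a sustained period. The sketch below is purely illustrative: the function name, the 60-degree limit and the 4-second hold time are hypothetical values chosen for the example, not parameters of the BIONIC system.

```python
def posture_alerts(angles_deg, dt, limit_deg=60.0, min_duration_s=4.0):
    """Return the sample indices at which an alert would fire.

    angles_deg:     sequence of angle measurements in degrees.
    dt:             sampling interval in seconds.
    limit_deg:      hypothetical angle above which posture counts as strained.
    min_duration_s: hypothetical time the limit must be exceeded before alerting.
    """
    alerts = []
    held = 0.0        # how long the limit has been exceeded without interruption
    alerted = False   # ensures one alert per sustained episode
    for i, angle in enumerate(angles_deg):
        if angle > limit_deg:
            held += dt
            if held >= min_duration_s and not alerted:
                alerts.append(i)
                alerted = True
        else:
            # Posture corrected: reset the timer and allow a new alert later.
            held = 0.0
            alerted = False
    return alerts

# Hypothetical trunk flexion angles sampled once per second.
trace = [10, 70, 70, 70, 70, 70, 10, 70]
alert_indices = posture_alerts(trace, dt=1.0)
```

Requiring a minimum duration keeps brief, harmless movements from triggering the watch on every bend.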

All of this is part of the BIONIC project, which is funded by the European Union. BIONIC stands for “Personalized Body Sensor Networks with Built-In Intelligence for Real-Time Risk Assessment and Coaching of Ageing workers, in all types of working and living environments”. It is coordinated by Professor Didier Stricker, head of the Augmented Vision/Extended Reality research unit at DFKI. The aim is to develop a sensor system that reduces incorrect posture and other physical strain at the workplace.

In addition to DFKI and TUK, the following partners are involved in the project: the Federal Institute for Occupational Safety and Health (BAuA) in Dortmund, the Instituto de Biomecánica de Valencia and the Fundación Laboral de la Construcción, both in Spain, the Roessingh Research and Development Centre at the University of Twente in the Netherlands, the Systems Security Lab at the University of Piraeus in Greece, Interactive Wear GmbH in Munich, Hypercliq IKE in Greece, ACCIONA Construcción S.A. in Spain and Rolls-Royce Power Systems AG in Friedrichshafen.

Further information:

Website BIONIC

Video

Contact: Markus Miezal, Dipl.-Ing. Mathias Musahl

Related news: 02/26/2019 Launch of new EU project “BIONIC,” an intelligent sensor network designed to reduce the physical demands at the workplace