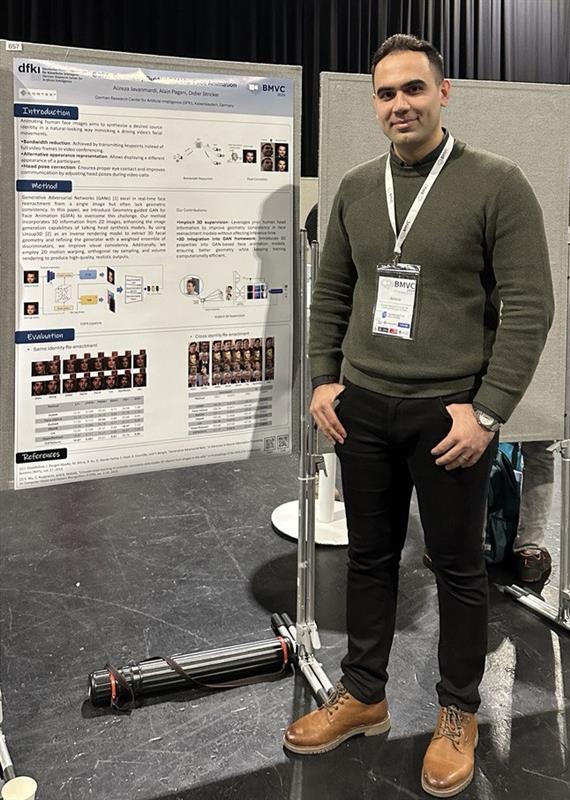

On December 19th, 2024, Jilliam Díaz Barros successfully defended her doctoral thesis entitled ‘Optimization and Generative Models for Face Analysis’. The PhD thesis was carried out in the Augmented Vision department at DFKI, led by Prof. Dr. Didier Stricker, as part of the Computer Science department at RPTU.

The PhD examination commission consisted of Prof. Dr. Didier Stricker (RPTU) and Prof. Dr. Luís Gonzaga Mendes Magalhães (University of Minho) and was chaired by Prof. Dr. Annette Bieniusa (RPTU).

In her thesis, Jilliam Díaz investigated the modelling of the rigid and non-rigid motions of the head, targeting existing challenges in assistance tools and assistive technologies. The PhD thesis focused on four main areas of contribution: head pose estimation, performance-driven facial animation, facial landmark detection and tracking, and face and upper body image synthesis.

Jilliam Díaz received her Bachelor's degree in Electronics Engineering from Universidad del Norte, Colombia, and her M.Sc. in Computer Science and Electronics from the Université de Bourgogne, France. Besides being part of the Augmented Vision department, Ms. Díaz Barros has been working as a software engineer at ANNA Healthcare Saarland UG.

Congratulations on receiving your PhD! We wish you all the best for the future.