As part of a research cooperation, DFKI’s Augmented Vision Department and BMW are working jointly on Augmented Reality for in-car applications. Ahmet Firintepe, a PhD researcher at BMW supervised by Dr. Alain Pagani and Prof. Didier Stricker, has recently published two papers on outside-in head and glasses pose estimation:

Ahmet Firintepe, Alain Pagani and Didier Stricker:

“A Comparison of Single and Multi-View IR image-based AR Glasses Pose Estimation Approaches”

Proc. of the IEEE Virtual Reality conference – Posters. IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW) (IEEEVR-2021)

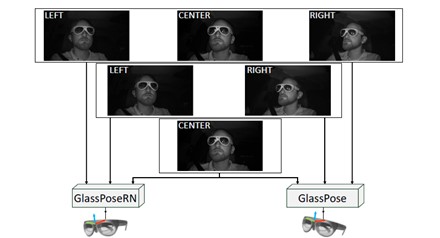

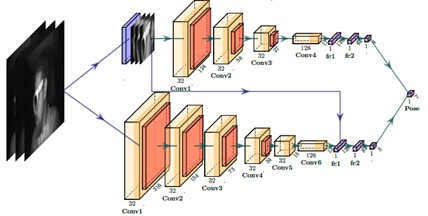

In this paper, we present a study on single and multi-view image-based AR glasses pose estimation with two novel methods. The first approach, GlassPose, is a VGG-based network. The second approach, GlassPoseRN, is based on ResNet18. We train and evaluate the two custom-developed glasses pose estimation networks with one, two and three input images on the HMDPose dataset. We achieve errors as low as 0.10 degrees and 0.90 mm on average on all axes for orientation and translation. For both networks, we observe minimal improvements in position estimation with more input views.
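To make the reported figures easier to read, here is a minimal sketch of how an error "on average on all axes" could be computed. It assumes the metric is a mean absolute error over the three rotation axes (in degrees) and the three translation axes (in mm); the paper's exact evaluation protocol may differ, and the function names and the `(roll, pitch, yaw, x, y, z)` pose layout are hypothetical.

```python
def angular_difference_deg(predicted, target):
    """Smallest absolute difference between two angles, in degrees."""
    # Wrap the raw difference into [-180, 180) so that 179 vs -179 counts as 2.
    diff = (predicted - target + 180.0) % 360.0 - 180.0
    return abs(diff)


def mean_axis_errors(predicted_poses, target_poses):
    """Average absolute pose error over all samples and all axes.

    Assumed pose layout (hypothetical): (roll, pitch, yaw, x, y, z),
    with angles in degrees and positions in millimetres.
    Returns (orientation error in degrees, translation error in mm).
    """
    if not predicted_poses or len(predicted_poses) != len(target_poses):
        raise ValueError("need two non-empty pose lists of equal length")

    rotation_total = 0.0
    translation_total = 0.0
    for pred, tgt in zip(predicted_poses, target_poses):
        rotation_total += sum(
            angular_difference_deg(p, t) for p, t in zip(pred[:3], tgt[:3])
        )
        translation_total += sum(abs(p - t) for p, t in zip(pred[3:], tgt[3:]))

    # Three rotation axes and three translation axes per sample.
    axis_count = 3 * len(predicted_poses)
    return rotation_total / axis_count, translation_total / axis_count
```

Calling `mean_axis_errors` on the predictions of a one-view, two-view and three-view model against the same ground truth would give directly comparable numbers for each configuration.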

Ahmet Firintepe, Carolin Vey, Stylianos Asteriadis, Alain Pagani, Didier Stricker:

“From IR Images to Point Clouds to Pose: Point Cloud-Based AR Glasses Pose Estimation”

In: Journal of Imaging, 7, 80, pages 1-18, MDPI, 4/2021.

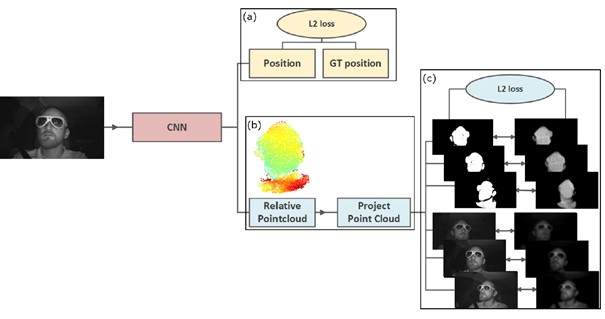

In this paper, we propose two novel AR glasses pose estimation algorithms from single infrared images by using 3D point clouds as an intermediate representation. Our first approach, “PointsToRotation”, is based on a Deep Neural Network alone, whereas our second approach, “PointsToPose”, is a hybrid model combining Deep Learning and a voting-based mechanism. Our methods utilize a point cloud estimator, which we trained on multi-view infrared images in a semi-supervised manner, generating point clouds based on one image only. We generate a point cloud dataset with our point cloud estimator using the HMDPose dataset, consisting of multi-view infrared images of various AR glasses with the corresponding 6-DoF poses. In comparison to another point cloud-based 6-DoF pose estimation method named CloudPose, we achieve an error reduction of around 50%. Compared to a state-of-the-art image-based method, we reduce the pose estimation error by around 96%.