We are proud that our paper “RPSRNet: End-to-End Trainable Rigid Point Set Registration Network using Barnes-Hut 2^D-Tree Representation” has been accepted for publication at the Conference on Computer Vision and Pattern Recognition (CVPR) 2021, which will take place virtually from June 19th to 25th. CVPR is the premier annual computer vision conference. Our paper was accepted from ~12000 submissions, with an acceptance rate of 23.4%.

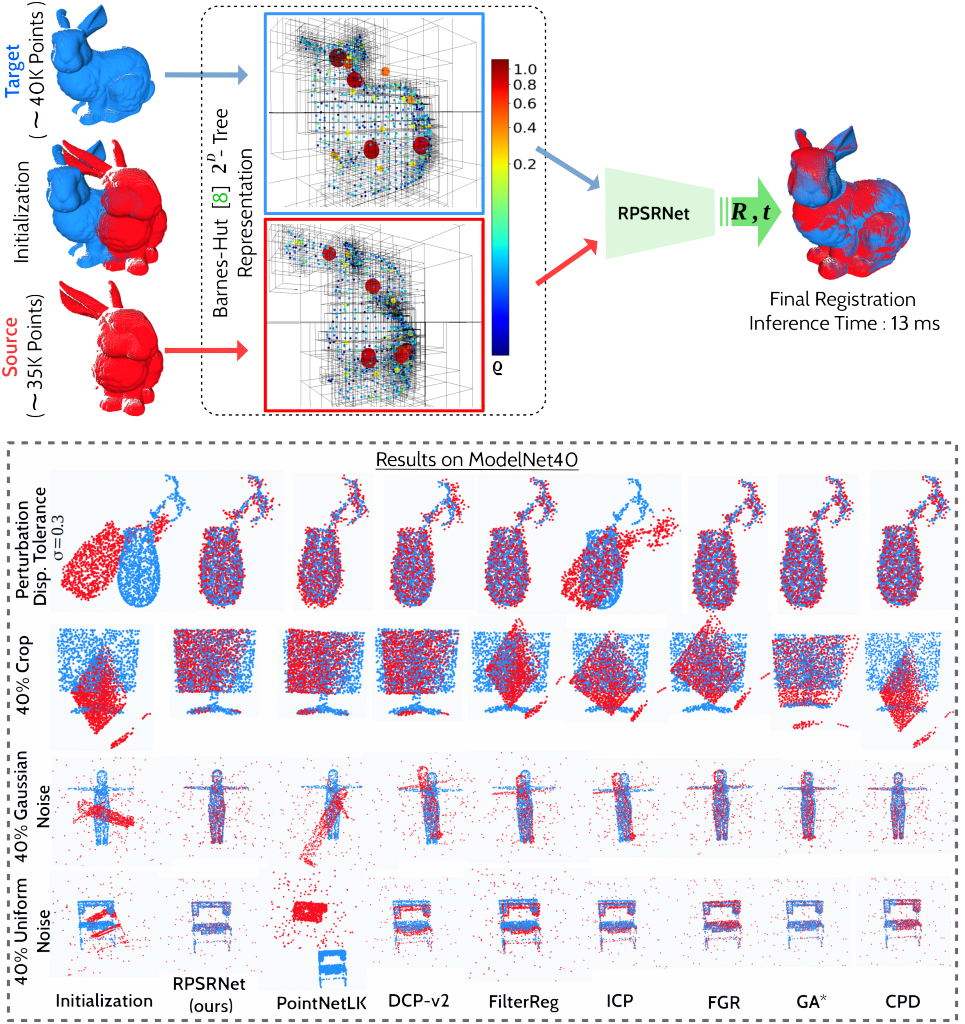

Abstract: We propose RPSRNet – a novel end-to-end trainable deep neural network for rigid point set registration. For this task, we use a novel 2^D-tree representation for the input point sets and a hierarchical deep feature embedding in the neural network. An iterative transformation refinement module of our network boosts the feature matching accuracy in the intermediate stages. We achieve an inference speed of ~12-15 ms to register a pair of input point clouds as large as ~250K points. Extensive evaluations on (i) KITTI LiDAR-odometry and (ii) ModelNet40 datasets show that our method outperforms prior state-of-the-art methods – e.g., on the KITTI dataset, DCP-v2 by 1.3 and 1.5 times, and PointNetLK by 1.8 and 1.9 times better rotational and translational accuracy, respectively. Evaluation on ModelNet40 shows that RPSRNet is more robust than other benchmark methods when the samples contain a significant amount of noise and disturbance. RPSRNet accurately registers point clouds with non-uniform sampling densities, e.g., LiDAR data, which cannot be processed by many existing deep-learning-based registration methods.

Representation: The centers of mass (CoMs) and point densities of non-empty tree nodes are computed for the respective BH-trees of the source and target. These two attributes are input to our RPSRNet, which predicts the rigid transformation from the global feature embedding of the tree nodes.
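To make the two node attributes concrete, here is a minimal sketch of how a center of mass and a point density could be computed for the non-empty cells at one level of a 2^D-tree. It is an illustration only, not the implementation from the paper: the function name `node_attributes`, the fixed-depth grid in place of a full hierarchical tree, and the definition of density as the fraction of all points falling in a cell are assumptions.

```python
from collections import defaultdict


def node_attributes(points, depth):
    """Return {cell_index: (center_of_mass, density)} for non-empty cells.

    points: list of equal-length coordinate tuples (D = 2 or 3, for example).
    depth:  tree level; the bounding cube has 2**depth cells along each axis.
    Density is the fraction of all points in the cell (an assumption made
    for illustration; the paper may define it differently).
    """
    if not points:
        return {}
    dim = len(points[0])
    lo = [min(p[k] for p in points) for k in range(dim)]
    hi = [max(p[k] for p in points) for k in range(dim)]
    # Use a cube with the same side on every axis, since a 2^D-tree
    # subdivides a cube. Fall back to 1.0 if all points coincide.
    side = max(h - l for l, h in zip(lo, hi)) or 1.0
    cells = 2 ** depth

    sums = defaultdict(lambda: [0.0] * dim)
    counts = defaultdict(int)
    for p in points:
        # Integer cell coordinate per axis, clamped so that points on the
        # upper boundary stay inside the last cell.
        idx = tuple(
            min(int((p[k] - lo[k]) / side * cells), cells - 1)
            for k in range(dim)
        )
        counts[idx] += 1
        for k in range(dim):
            sums[idx][k] += p[k]

    total = len(points)
    return {
        idx: (tuple(s / counts[idx] for s in sums[idx]), counts[idx] / total)
        for idx in counts
    }
```

Calling `node_attributes(cloud, depth)` once for the source and once for the target yields, per non-empty cell, the pair of attributes described in the caption. Empty cells never appear in the result, which keeps the representation sparse for clouds with non-uniform density.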

Authors: Sk Aziz Ali, Kerem Kahraman, Gerd Reis, Didier Stricker

To view the paper, please click here.